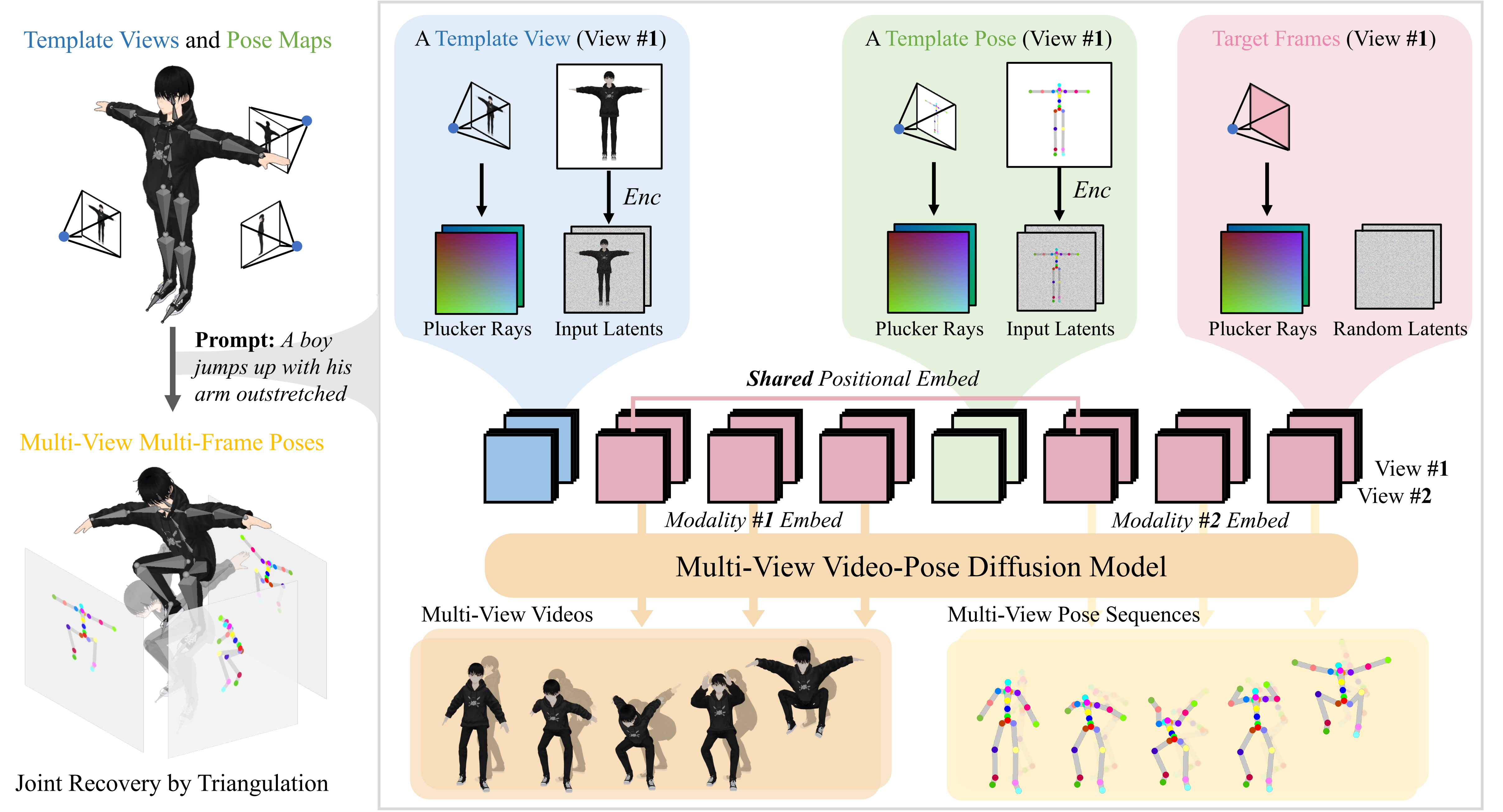

We present AnimaX, a feed-forward 3D animation framework that bridges the motion priors of video diffusion models with the controllable structure of skeleton-based animation. Traditional motion synthesis methods are either restricted to fixed skeletal topologies or require costly optimization in high-dimensional deformation spaces. In contrast, AnimaX effectively transfers video-based motion knowledge to the 3D domain, supporting diverse articulated meshes with arbitrary skeletons. Our method represents 3D motion as multi-view, multi-frame 2D pose maps, and enables joint video-pose diffusion conditioned on template renderings and a textual motion prompt. We introduce shared positional encodings and modality-aware embeddings to ensure spatial-temporal alignment between video and pose sequences, effectively transferring video priors to the motion generation task. The resulting multi-view pose sequences are triangulated into 3D joint positions and converted into mesh animation via inverse kinematics. Trained on a newly curated dataset of 160,000 rigged sequences, AnimaX achieves state-of-the-art results on VBench in generalization, motion fidelity, and efficiency, offering a scalable solution for category-agnostic 3D animation.

AnimaX animates an articulated 3D mesh in minutes. AnimaX has two stages: (1) generating multi-view consistent videos and corresponding pose sequences simultaneously, conditioned on rendered template views and pose maps from the input mesh, with a textual description; and (2) recovering 3D joint positions per frame using multi-view triangulation and applying inverse kinematics to obtain the joint angles and animate the mesh.
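
The triangulation in stage (2) can be illustrated with a minimal sketch. It assumes calibrated views with known 3x4 projection matrices and uses the standard direct linear transform (DLT). The text does not state which solver AnimaX uses, and the function names `triangulate_joint` and `triangulate_skeleton` are hypothetical, chosen only for this example.

```python
import numpy as np


def triangulate_joint(projections, points_2d):
    """Recover one 3D point from its 2D observations in several views (DLT).

    projections: (V, 3, 4) camera projection matrices, one per view (assumed known).
    points_2d:   (V, 2) pixel coordinates of the same joint in each view.
    """
    projections = np.asarray(projections, dtype=float)
    points_2d = np.asarray(points_2d, dtype=float)

    # Each view contributes two linear constraints on the homogeneous point X:
    #   u * (P[2] @ X) - P[0] @ X = 0
    #   v * (P[2] @ X) - P[1] @ X = 0
    rows = []
    for P, (u, v) in zip(projections, points_2d):
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)

    # The least-squares solution is the right singular vector associated
    # with the smallest singular value of A.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


def triangulate_skeleton(projections, joints_2d):
    """Triangulate every joint of one frame.

    joints_2d: (V, J, 2) 2D joint positions per view.
    Returns:   (J, 3) 3D joint positions.
    """
    joints_2d = np.asarray(joints_2d, dtype=float)
    num_joints = joints_2d.shape[1]
    return np.stack(
        [triangulate_joint(projections, joints_2d[:, j]) for j in range(num_joints)]
    )
```

Calling `triangulate_skeleton` once per frame yields a sequence of 3D joint positions, which an inverse kinematics solver could then convert into joint angles. With noisy 2D detections, a robust or confidence-weighted variant of the same linear system would likely be preferable.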
